After upgrading to OneFS 9.7.1.4, there is a feature you can enable to use SupportAssist for dial-in and for sending alerts to EMC. It will be mandatory in a future release of OneFS.

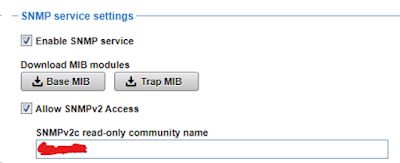

We have the latest version of SCG monitoring the Isilon, so we decided to enable SupportAssist using the gateway topology.

The links below explain SupportAssist for Isilon and how to enable it.

https://infohub.delltechnologies.com/sv-se/p/onefs-supportassist-provisioning-part-1/

https://infohub.delltechnologies.com/sv-se/p/onefs-supportassist-provisioning-part-2/

For the CLI steps to migrate to SupportAssist, see EMC Article Number: 000225888.

https://www.dell.com/support/kbdoc/en-uk/000225888/powerscale-how-to-migrate-from-secure-remote-support-srs-to-supportassist

Since there is an existing SCG monitoring the Isilon, the only thing I had to add was a firewall rule to allow traffic from all Isilon nodes to both SCG gateways on port 9443.
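To confirm the firewall rule is in place before provisioning, a quick TCP check against port 9443 on each gateway can help. The following is a minimal sketch, not a Dell tool: the gateway hostnames are hypothetical placeholders, and it assumes you run it from a host whose traffic passes through the same firewall path as the Isilon nodes (or from a node itself, if Python is available there). It only tests that the TCP port is reachable, not that SupportAssist can authenticate.

```python
import socket


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures.
        return False


def check_gateways(gateways, port=9443, timeout=3.0):
    """Map each gateway host to whether its port accepted a connection."""
    return {gw: can_connect(gw, port, timeout) for gw in gateways}


if __name__ == "__main__":
    # Hypothetical names: replace with your two SCG gateway addresses.
    gateways = ["scg-gw1.example.com", "scg-gw2.example.com"]
    for gw, ok in check_gateways(gateways).items():
        print(f"{gw}:9443 {'reachable' if ok else 'NOT reachable'}")
```

If either gateway shows as not reachable, check the firewall rule before looking at the SupportAssist configuration.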

Provisioning can take up to 24 hours to complete. See EMC KB Article Number: 000232371.

https://www.dell.com/support/kbdoc/en-us/000232371/powerscale-support-assist-provision-gets-stuck-in-incoming

======================================================================

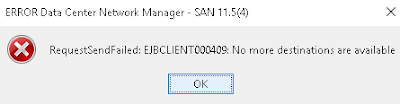

SupportAssist is not sending alerts back to EMC.

Checking from SCG, the connectivity shows as bad, but the CLI shows it as OK, and Dell can dial in without issue. After going back and forth with support, it turns out to be a bug: the connection stays up for a few days to a few weeks, then stops dialing back to EMC. See EMC KB Article Number: 000348244.

PowerScale: SupportAssist Disconnects SCG and CIQ - APEX AIOps Due to Crispies Lock on Node

We decided not to upgrade and to stay on 9.7.1.4, so support has to follow the KB to apply the workaround.

The other bug is a monthly email alert reminding you that the cluster is not connected back to EMC via eSRS, like the one below. After migrating to SupportAssist, it looks like eSRS is now shut down on the Isilon. I checked with support, and this alert can be ignored since the Isilon is now monitored by SupportAssist. I wonder if this will be fixed in a future release.

"This cluster is not connected back to EMC via ESRS. Configuring connectivity to EMC via ESRS is a secure method to improve the overall reliability of your cluster and reduce the time it takes to resolve any issues the cluster may develop."